Today, I was at Intel’s first AI Nervana Summit, held in the UK at the QEII Centre in London, to learn more about Intel’s plans for AI. Intel’s dedicated deep learning solutions were discussed—from their recent acquisition of Nervana and its new dedicated deep learning processors to Saffron and its natural language memory-based reasoning solutions used in predictive analysis.

Intel now has a new, dedicated AI Products group headed up by former CEO of Nervana, Amir Khosrowshahi. Amir predicts that dedicated AI instructions will appear on CPUs, much as integrated GPUs are now commonplace in the SoC (system-on-chip) packages available today.

The key message from those working in AI is that this technology is transformative. The companies and organizations scrambling to get on board operate across a number of industries, including health, finance, retail, government, energy, transport, industrial and many more.

Consumer applications have been among the most rapid adopters, from Amazon’s Echo (enhancing search results is a big area for AI) to predictive data analytics and fraud detection in banking. Intel’s technology is being deployed to solve difficult problems.

AI is affecting people’s lives through medical diagnostics such as retinal image processing: the human eye is extremely sensitive to changes in the human body. Diabetes can be detected from retinal images using convolutional neural networks, and early detection rates are increasing thanks to deep learning.

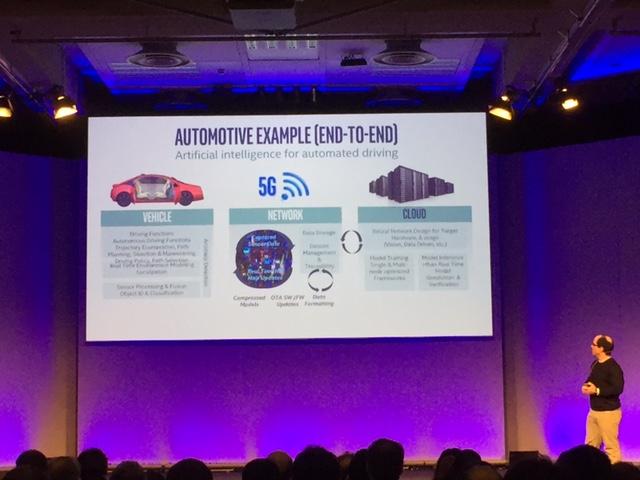

Today, one of the hottest areas of development is automotive. From in-car speech systems and sensor integration to fully autonomous vehicles, edge computing combined with deep learning is moving us closer to a world where driverless vehicles are a reality.

Data and compute models for deep learning have been around for a while, with work being done since the 1980s. It’s not a new area of research—but increased performance in embedded CPU technology and subtle incremental changes in the performance of networks have enabled applications that were thought impossible just a few years ago.

Altera (now part of Intel) FPGA technology has a role to play here for inference in the embedded space at the edge of the network, with applications including border/airport security, retail (inventory management), robotics and autonomous vehicles. FPGAs are small and low-power, making them well suited to inference in embedded applications.

Intel has announced its first dedicated Deep Learning Inference Accelerator (DLIA), optimized for inference and due to be available in Q2 2017. The accelerator was developed using OpenCL, and Intel provides a full software stack that removes the need for developers to know the internals of the FPGA or to learn VHDL.

Intel’s (Nervana) Lake Crest family of dedicated deep learning processors (high power/performance) is designed to be the best in class for AI. This will be an optimized high-end CPU, while standard Xeon processors will still provide great performance when training for more general-purpose use cases. These new processors, coupled with the ‘Neon’ Nervana Deep Learning Platform, will make it easy for data scientists to start from the iterative investigation phase and take models all the way to deployment.